The enterprise AI conversation has shifted. A year ago, the dominant question was "how do we get our teams using AI?" Today, the question CIOs and CDOs are asking is more pointed: "why is our AI only helping individuals when we need it transforming operations?"

That distinction is the fault line separating AI copilots from AI agents, and understanding it is now a strategic necessity for enterprise technology leaders.

The difference between an AI copilot and an AI agent is not marketing spin. It is a fundamental architectural distinction in how AI systems are designed to interact with humans, data, and workflows.

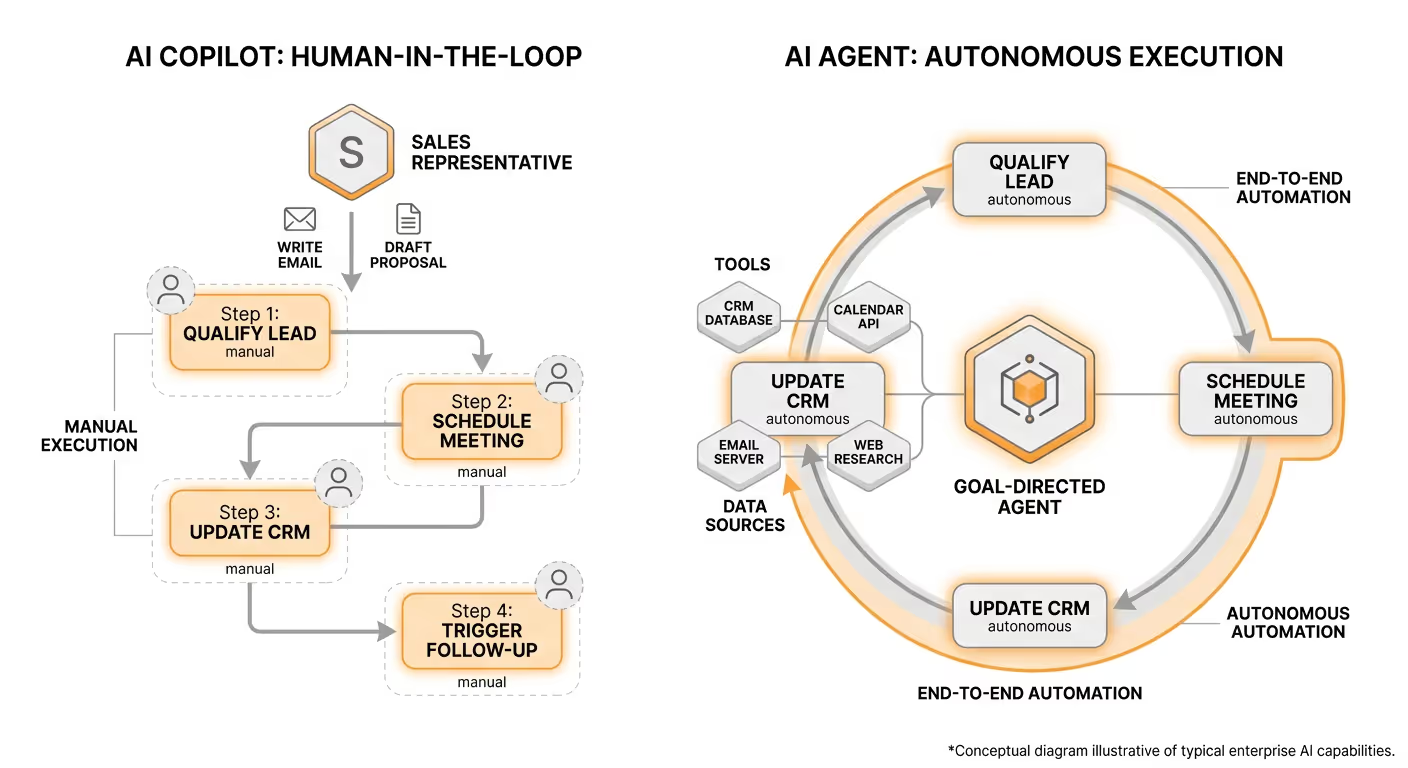

An AI copilot is a prompt-response assistant. It waits for a human to ask something, generates a response, and stops. Microsoft 365 Copilot is the most familiar example: it summarizes emails, drafts documents, and answers questions. The human remains the operator at every step. The AI augments what you do; it does not do things independently. These systems typically offer a 5-10% improvement in individual employee productivity, which is meaningful but bounded.

An AI agent is a goal-directed system. You give it an objective, and it plans, reasons, and executes a sequence of actions to achieve it, using tools, querying data sources, and making decisions without a human steering each step. The difference in business impact is dramatic: AI agents can improve structured enterprise workflows by more than 30%. A copilot that helps a sales representative write a better email is simply not in the same category as an agent that qualifies leads, schedules meetings, updates the CRM, and triggers follow-up sequences without the representative touching the keyboard.
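To make the architectural distinction concrete, here is a minimal Python sketch of the two interaction patterns. It is an illustration under stated assumptions, not any vendor's implementation: the `copilot` function, the `Agent` class, the tool names, and especially the trivial `plan_next` planner (which simply takes the next pending step, where a real agent would ask a model to choose) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical tool signature: a tool reads the shared state and returns a log line.
Tool = Callable[[dict], str]


def copilot(prompt: str, generate: Callable[[str], str]) -> str:
    """Copilot pattern: one prompt in, one response out, then stop."""
    return generate(prompt)


@dataclass
class Agent:
    """Agent pattern: pursue a goal by choosing and running tools until done."""
    tools: dict
    max_steps: int = 10
    log: list = field(default_factory=list)

    def plan_next(self, state: dict) -> Optional[str]:
        # Placeholder planner (assumption): a real agent would ask an LLM to pick
        # the next tool; here we simply take the next pending step.
        return state["pending"][0] if state["pending"] else None

    def run(self, goal: str, steps: list) -> list:
        state = {"goal": goal, "pending": list(steps)}
        for _ in range(self.max_steps):  # step budget guards against runaway loops
            action = self.plan_next(state)
            if action is None:  # nothing left to do: goal treated as reached
                break
            self.log.append(self.tools[action](state))
            state["pending"].remove(action)
        return self.log


if __name__ == "__main__":
    # The sales example from the text, with stubbed (hypothetical) tools.
    tools = {
        "qualify_lead": lambda s: "lead qualified",
        "schedule_meeting": lambda s: "meeting scheduled",
        "update_crm": lambda s: "CRM updated",
        "trigger_follow_up": lambda s: "follow-up sequence started",
    }
    agent = Agent(tools)
    print(agent.run("convert inbound lead", list(tools)))
```

The structural point is the loop: the copilot returns after a single generation, while the agent keeps selecting and running tools until nothing is left to do or its step budget (`max_steps`) runs out.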

Gartner draws this line explicitly. According to Gartner, the most common misconception is calling AI assistants agents, a practice known as "agentwashing." AI assistants are the precursor to agentic AI. They simplify tasks and interactions for users but depend on human input and do not operate independently.

The market data on this transition is striking. According to Gartner, 40% of enterprise applications will be integrated with task-specific AI agents by the end of 2026, up from less than 5% in 2025. The research firm expects the rise of agentic AI to be one of the fastest transformations in enterprise technology since the adoption of the public cloud.

McKinsey's 2025 State of AI survey captures where enterprises currently sit in this transition. Twenty-three percent of respondents report that their organizations are scaling an agentic AI system somewhere in the enterprise, and an additional 39 percent say they have begun experimenting with AI agents. Taken together, 62 percent of respondents' organizations are now actively engaged with agentic AI, not just watching from the sidelines.

The economic stakes reinforce the urgency. McKinsey estimates that agentic AI productivity gains could unlock up to $2.9 trillion in economic value by 2030. Meanwhile, 74% of companies still report they have yet to show tangible value from their broader AI investments, even as 94% of global business leaders believe AI is critical to their five-year success. The gap between investment and measurable impact is precisely the gap that autonomous agents are designed to close. For a structured look at how to bridge that gap, the Data Leader's Playbook for Building an Agentic Enterprise offers a practical framework for moving from copilot-era thinking to agent-era execution.

Understanding why enterprises are hitting a ceiling with copilots requires looking at the architecture, not just the feature set.

Microsoft Copilot runs on Microsoft's cloud infrastructure. Your prompts, your documents, your enterprise data: all of it flows through Microsoft's shared services to reach the AI models. For many organizations, this was an acceptable trade-off when Copilot was handling individual productivity tasks. It is becoming an unacceptable one as AI is asked to touch more sensitive data and execute more consequential workflows.

The security record here is worth examining directly. In mid-2025, researchers disclosed EchoLeak (CVE-2025-32711), a "zero-click" AI vulnerability that allowed attackers to exfiltrate sensitive data from Microsoft 365 Copilot's context without any user interaction. Rated critical, it was assigned a CVSS score of 9.3. Potentially exposed information included anything within Copilot's access scope, such as chat logs, OneDrive files, SharePoint content, Teams messages, and other preloaded organizational data.

Then, in January 2026, a separate product logic bug emerged. A code error in Microsoft 365 Copilot Chat allowed the AI to read and summarize emails from users' Sent Items and Drafts folders that were marked with confidentiality sensitivity labels, despite DLP policies being configured to block AI processing of those emails. Affected content included business agreements, legal communications, governmental inquiries, and protected health information.

The structural lesson from both incidents is the same. Every security control that was supposed to prevent unauthorized AI processing, including sensitivity labels, DLP, and access restrictions, lived inside the same platform as the AI itself. When the platform broke, everything broke. The January incident also showed that traditional DLP frameworks were not designed for AI systems that index folders in the background without user action.

This is not a bug-patching problem. It is an architectural one. The article "7 reasons why ChatGPT and Copilot put your enterprise at risk" goes deeper on the structural vulnerabilities that shared-infrastructure AI creates for regulated organizations.

The U.S. House of Representatives has banned congressional staffers from using Microsoft Copilot. "The Microsoft Copilot application has been deemed by the Office of Cybersecurity to be a risk to users due to the threat of leaking House data to non-House approved cloud services," the guidance stated. The European Parliament's IT department has reportedly taken similar steps, blocking built-in AI features on staff devices over concerns that AI tools could upload confidential correspondence to the cloud. These are not isolated decisions. They are leading indicators for regulated industries.

Concrete examples make the contrast between copilots and agents clear. Consider two scenarios, one in healthcare and one in finance.

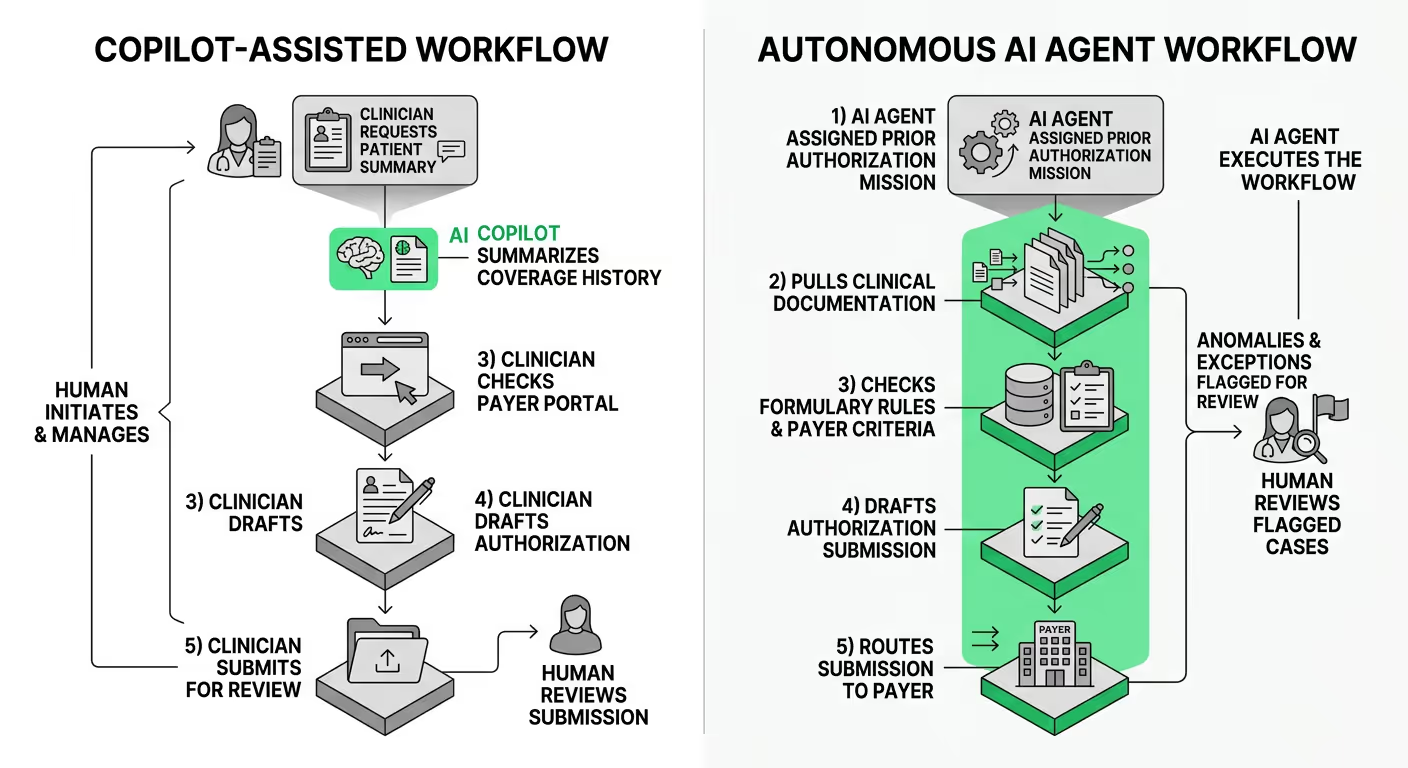

A prior authorization workflow with a copilot looks like this: a clinician asks Copilot to summarize a patient's coverage history, gets a summary, manually checks the payer portal, drafts a request, and sends it for review. The AI assisted one step. The human ran the workflow.

With an autonomous AI agent, the mission is assigned at the workflow level: process prior authorization requests for this patient cohort, flag anomalies for clinical review, and submit to payers. The agent pulls relevant clinical documentation, checks formulary rules, cross-references payer criteria, drafts the authorization submission, and routes it appropriately. A human reviews the flagged cases. The AI executed the workflow.
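The "flag anomalies, submit the rest" step can be sketched in a few lines of Python. This is a minimal illustration, assuming hypothetical payer rules held in a `PAYER_CRITERIA` table that maps a drug to its covered diagnosis codes and required documents; real payer criteria, formularies, and submission APIs are far more complex, and `AuthRequest`, `triage`, and `process_cohort` are illustrative names, not part of any product.

```python
from dataclasses import dataclass


@dataclass
class AuthRequest:
    patient_id: str
    drug: str
    diagnosis_code: str
    documents: set


# Hypothetical payer rules: drug -> (covered diagnosis codes, required documents).
PAYER_CRITERIA = {
    "drug_x": ({"E11.9"}, {"lab_results", "prior_therapy_notes"}),
}


def triage(req: AuthRequest) -> tuple:
    """Return ("submit", []) when criteria are met, else ("clinical_review", reasons)."""
    if req.drug not in PAYER_CRITERIA:
        return "clinical_review", [f"no payer criteria on file for {req.drug}"]
    covered_codes, required_docs = PAYER_CRITERIA[req.drug]
    reasons = []
    if req.diagnosis_code not in covered_codes:
        reasons.append(f"diagnosis {req.diagnosis_code} not covered for {req.drug}")
    missing = sorted(required_docs - req.documents)
    if missing:
        reasons.append("missing documents: " + ", ".join(missing))
    return ("clinical_review", reasons) if reasons else ("submit", [])


def process_cohort(requests) -> tuple:
    """Split a cohort into ready-to-submit requests and flagged cases for clinicians."""
    ready, flagged = [], []
    for req in requests:
        route, reasons = triage(req)
        if route == "submit":
            ready.append(req.patient_id)
        else:
            flagged.append((req.patient_id, reasons))
    return ready, flagged
```

Only the `flagged` list reaches a human, which is the point of the workflow-level assignment: clinicians review the exceptions, not every request.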

In practical enterprise terms, then, the difference between a copilot and an AI agent is this: one helps you do the task; the other does the task under your oversight. For a deeper look at how this plays out across clinical workflows, the guide on how to use agentic AI in healthcare covers the specific patterns, governance considerations, and deployment requirements that regulated health systems need to evaluate.

In financial services, the same pattern applies. An invoice reconciliation agent does not wait for an accounts payable analyst to ask a question. It monitors incoming invoices, matches against POs, flags discrepancies, escalates exceptions, and updates the ERP. The analyst's attention is redirected to exceptions that genuinely require human judgment, not to routine matching.
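A minimal Python sketch of the matching and exception step, assuming each invoice carries a PO number and that a 1% amount tolerance is acceptable (both assumptions; real accounts payable rules such as three-way matching against goods receipts vary by organization). The `reconcile` function and the data classes are hypothetical, and the ERP update is left out because it depends entirely on the ERP's own API.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class PurchaseOrder:
    po_number: str
    vendor: str
    amount: Decimal


@dataclass
class Invoice:
    invoice_id: str
    po_number: str
    vendor: str
    amount: Decimal


def reconcile(invoices, purchase_orders, tolerance=Decimal("0.01")):
    """Match invoices to POs; return (matched invoice ids, exceptions for a human)."""
    pos = {po.po_number: po for po in purchase_orders}
    matched, exceptions = [], []
    for inv in invoices:
        po = pos.get(inv.po_number)
        if po is None:
            exceptions.append((inv.invoice_id, "no matching PO"))
        elif po.vendor != inv.vendor:
            exceptions.append((inv.invoice_id, "vendor mismatch"))
        elif abs(po.amount - inv.amount) > po.amount * tolerance:
            # Outside tolerance: escalate instead of silently accepting the amount.
            diff = inv.amount - po.amount
            exceptions.append((inv.invoice_id, f"amount differs from PO by {diff}"))
        else:
            matched.append(inv.invoice_id)
    return matched, exceptions
```

Using `Decimal` rather than `float` avoids rounding surprises in currency comparisons; only the `exceptions` list would be routed to an analyst.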

Although 70% of Fortune 500 companies are piloting Microsoft 365 Copilot, most remain in restricted pilots rather than enterprise-wide deployments. Forrester's Q1 2026 analysis confirms that most organizations are not scaling past pilot mode. The barrier is not a lack of enthusiasm; it is governance, security, and infrastructure.

Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027. The root causes are consistent: organizations treat agent deployment as a technology problem when it is fundamentally an organizational and infrastructure one. Enterprises attempting to build autonomous agents on top of general-purpose copilot platforms lack the orchestration, governance, and data control layer required to take agents into production.

There is also a compliance dimension, and it is intensifying. European regulators issued €2.3 billion in GDPR fines in 2025 alone, a 38% year-over-year increase, and most of the EU AI Act's obligations become enforceable in August 2026. For healthcare, finance, and government enterprises, running AI workflows on external cloud infrastructure is not just a security question. It is a question of regulatory exposure.

For organizations evaluating whether to go beyond copilot-style AI, the infrastructure requirements for production-grade autonomous agents are distinct from what copilot platforms provide. A robust implementation requires the orchestration, governance, and data control layer that general-purpose copilot platforms lack.

This is the infrastructure gap that explains why enterprises searching for an "AI copilot alternative" keep arriving at the same conclusion: the platform underneath the agent matters as much as the agent itself.

This is the precise inflection point Shakudo was built for.

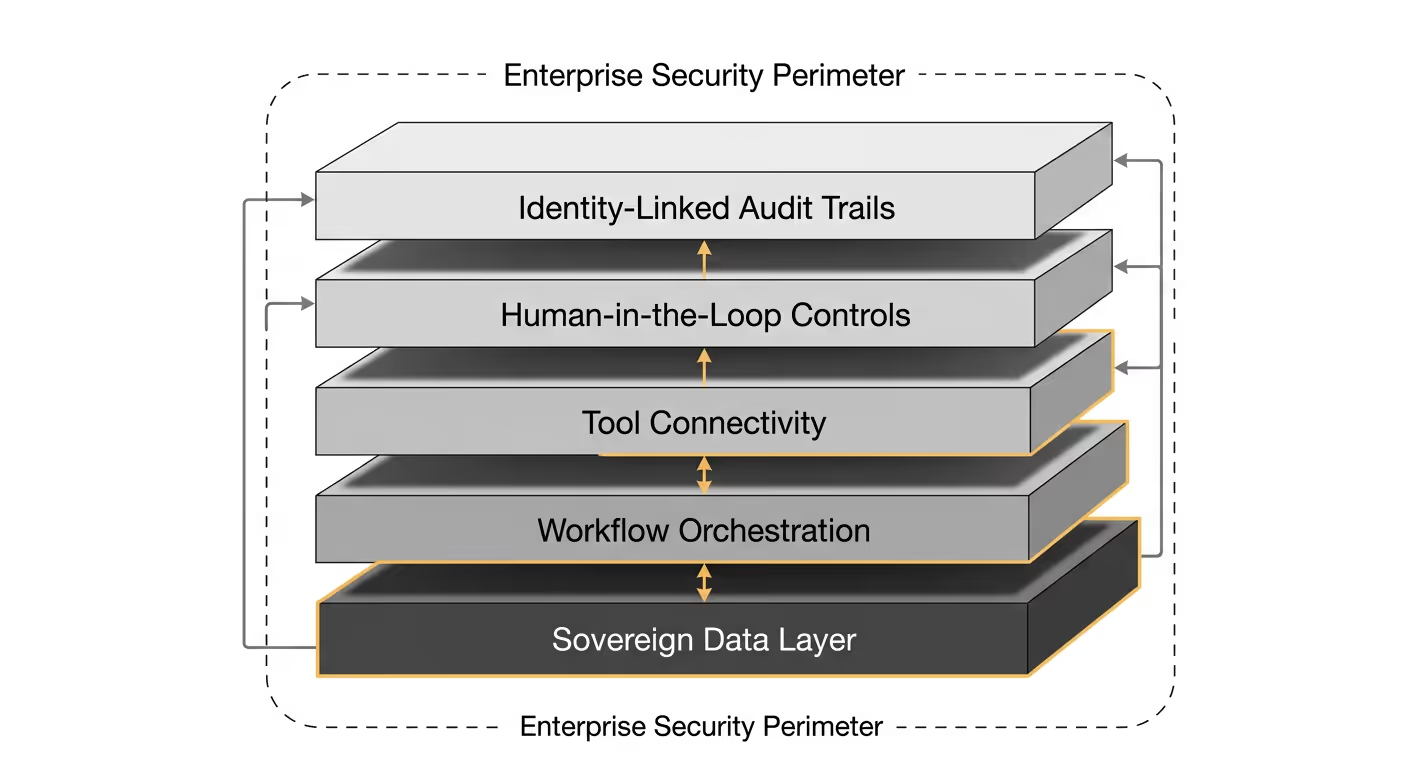

Shakudo is the AI operating system for the enterprise, deploying entirely within an organization's own cloud VPC (AWS, Azure, GCP) or on-premise infrastructure. Every query, every prompt, every output stays inside the customer's perimeter. External LLMs can be used with zero-retention and zero-training guarantees. The platform is the sovereign foundation; the question is what runs on it.

Kaji is Shakudo's enterprise AI agent, the intelligence layer that operates within an organization's already-deployed Shakudo environment. Where Copilot waits to be asked, Kaji executes. Enterprises give Kaji complex missions, such as surfacing data insights, automating compliance monitoring, and orchestrating multi-step workflows across their tech stack, and Kaji carries them out autonomously using over 200 prebuilt connections to data, engineering, and business tools. Critically, Kaji works where teams already collaborate: Slack, Teams, and Mattermost. There is no new interface to learn.

The human-in-the-loop design is not an afterthought. Kaji pauses and requests approval before high-stakes or irreversible actions, which addresses the governance concern that has blocked agentic AI adoption in regulated industries. This is not an architectural compromise; it is what separates agents that can reach production from proof-of-concepts that cannot.
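The general approval-gate pattern can be sketched in Python as follows. This is a minimal illustration of human-in-the-loop gating in general, not Kaji's actual mechanism, which the text does not describe: `Action`, `execute_with_approval`, and the `approve` callback are hypothetical names, and in practice `approve` might post a request to a chat channel and wait for a reply.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    irreversible: bool  # e.g. deleting records, sending payments, submitting filings
    run: Callable[[], str]


def execute_with_approval(actions, approve: Callable[[Action], bool]) -> list:
    """Run routine actions directly; require human sign-off before irreversible ones."""
    results = []
    for action in actions:
        if action.irreversible and not approve(action):
            # Halt rather than skip: later steps may depend on the denied one.
            results.append(f"{action.name}: denied, plan halted")
            break
        results.append(f"{action.name}: {action.run()}")
    return results
```

The design choice worth noting is that the approver is consulted only for actions flagged as irreversible, so routine steps proceed without interruption while high-stakes ones wait for a person.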

Shakudo's AI Gateway adds the governance layer that platform-hosted AI structurally cannot provide: it strips PII and PHI from payloads before they reach any external model, filters sensitive fields from agent responses before they leave the VPC, and maintains a permanent, identity-linked audit trail for compliance. When a code error hit the Microsoft platform, every control failed simultaneously, because those controls lived inside the same system as the AI. Shakudo's architecture makes that failure mode structurally impossible.
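The gateway pattern described above (redact on the way out, filter on the way back, log every call against an identity) can be sketched in Python. This is a minimal illustration under loud assumptions and not Shakudo's implementation: the three regular expressions are deliberately simplistic stand-ins for real PII/PHI detection, and `redact`, `gateway_call`, and the `send_to_model` callback are hypothetical names.

```python
import hashlib
import json
import re
from datetime import datetime, timezone

# Illustrative patterns only; production PII/PHI detection needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> tuple:
    """Replace each match with a typed placeholder and count what was removed."""
    counts = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


def gateway_call(user_id: str, prompt: str, send_to_model, audit_log: list) -> str:
    """Redact before the external call, filter the reply, and append an audit entry."""
    clean_prompt, counts = redact(prompt)
    raw_response = send_to_model(clean_prompt)  # hypothetical external model client
    safe_response, _ = redact(raw_response)     # filter the response before returning it
    audit_log.append(json.dumps({
        "user": user_id,
        "ts": datetime.now(timezone.utc).isoformat(),
        "redactions": counts,
        # Store a hash rather than the prompt itself so the log holds no raw content.
        "prompt_sha256": hashlib.sha256(clean_prompt.encode()).hexdigest(),
    }))
    return safe_response
```

Because `gateway_call` sits between the caller and the model rather than inside the model's platform, a fault in the model service does not disable the redaction or the audit trail, which is the architectural argument the paragraph makes.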

For enterprise architects and technology leaders weighing AI agents against copilots, the conversation is no longer theoretical. Gartner analysts warn that CIOs have just three to six months to define their AI agent strategies or risk ceding ground to faster-moving competitors.

The productivity ceiling of assisted AI is real and documented. The security record of hyperscaler-hosted AI in regulated environments is increasingly difficult to defend to a board or a regulator. And the economic case for autonomous agents (not just copilots that augment individuals, but agents that transform workflows) is now supported by a growing body of enterprise evidence.

The question is no longer whether to move beyond assisted AI. It is how to do so without surrendering the data sovereignty that regulated enterprises cannot afford to give up.

If your organization is evaluating that transition, Kaji is built exactly for this moment.
