The AI agent market is moving fast. OpenClaw has captured attention, NVIDIA used GTC 2026 to push agentic AI further into the mainstream, and projects like Hermes Agent show how quickly the category is expanding. NVIDIA's NemoClaw announcement made the shift even clearer. The conversation has moved beyond whether AI agents will persist and toward which architectures can hold up in real enterprise environments.

This is where many teams stumble. A personal AI assistant can be compelling, fast, and even secure for one operator, but enterprise teams evaluate against a different checklist: user isolation, approval flows, memory boundaries, secrets management, auditability, observability, and deployment control. Those requirements shape the deployment from day one.

OpenClaw's own README is unusually clear about its design center. It calls OpenClaw a personal AI assistant and says, "If you want a personal, single-user assistant that feels local, fast, and always-on, this is it." Its official security guidance is just as explicit. The trust model centers on one trusted operator boundary rather than a multi-tenant environment with conflicting users and shared risk.

That positioning tells you exactly which questions matter once a CIO, CISO, or platform lead starts evaluating deployment. Can users share one instance safely? Can memory stay partitioned by person or team? Can approvals stay ergonomic without becoming a security hole? Can secrets stay out of configs, sandboxes, and logs? Can the system be observed, audited, and governed without wrapping it in a second platform?

Those questions already appear in OpenClaw's public issue tracker. They range from requests for multi-user memory partitioning and session isolation to SecretRef support in sandbox environments and reports of plaintext secrets appearing in config audit logs.

To be fair, OpenClaw is not standing still. The product now documents exec approvals, secrets management, logging and OpenTelemetry export, and multi-agent routing. NVIDIA's NemoClaw packaging also shows that the ecosystem is actively adding more security and privacy scaffolding around claw-style agents.

That shift makes the comparison more important, because buyers now care less about demo capability and more about the trust model a product was built around. When the official docs start from a personal-assistant boundary, enterprises still have to bridge the gap from a single operator to a governed organizational runtime.

This is also where teams start asking for time-bounded approvals, tighter exec policy controls, partitioned memory, and cleaner secrets handling. The same concerns appear in responsible AI guidance: the NIST AI RMF Core organizes AI risk work into governing, mapping, measuring, and managing risk across the lifecycle. Microsoft's Azure guide for OpenClaw makes the operational point in plain language: teams want self-hosted agents on infrastructure they control, with security boundaries they can explain to IT. Enterprise rollout has to clear a higher bar than a strong local demo.

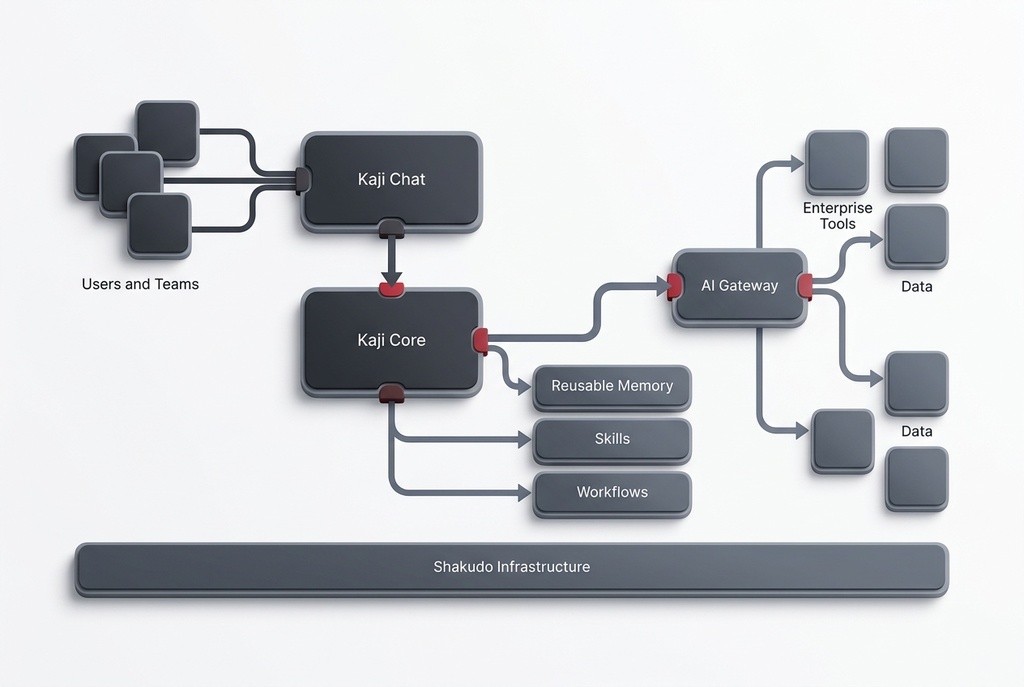

Kaji starts from the enterprise assumption. On its public product page, Shakudo positions Kaji as agentic AI that plans, delegates, and delivers inside your cloud. That framing matters because it shifts the category from assistant to governed runtime.

Kaji Chat gives teams a controlled surface for requests, review, approvals, outputs, and collaboration. Enterprise users want a place to initiate work, see what the system is doing, and intervene when needed.

Kaji Core turns goals into work. It can break tasks into steps, call tools, run workflows, and spin up sub-agents in isolated execution contexts. That operating model fits how enterprise teams work, because they need durable workspaces, governed tool access, and clear execution boundaries.

Kaji stores knowledge as memory, skills, workflows, and notes that teams can search and reuse across sessions. Enterprise teams need context that outlives any single operator or chat and can be applied across people and processes. Shakudo's Knowledge Graph matters here for that reason.

Kaji is built on Shakudo infrastructure. Enterprises can run it inside their own cloud or private environment, connect models and tools through AI Gateway, and combine best-of-breed components without giving up governance. If you want the broader picture, see Shakudo's guides to AI agent architecture, the enterprise AI agent infrastructure stack, enterprise AI agent production failures, Autonomous Enterprise AI with Kaji and Shakudo AI Gateway, and AI agent vs. copilot.

OpenClaw, NemoClaw, and Hermes Agent are important signals. They show that the market wants agents that persist, automate, and live closer to real work. But they also expose the same tension: what feels powerful for one operator can become brittle once multiple users, sensitive data, approvals, and compliance enter the picture.

OpenClaw belongs in the current wave of self-hosted personal agents. For teams that want to operationalize AI across systems, users, and governance boundaries, Kaji is the stronger fit. It combines Kaji Chat, Kaji Core, reusable memory, workflows, sub-agent orchestration, and Shakudo infrastructure into one governed operating model.

OpenClaw is best understood as a personal AI assistant with active work underway around enterprise needs. Its docs and issue tracker show real progress on approvals, secrets, logging, and multi-agent features, while also highlighting the remaining work around isolation, memory boundaries, and security ergonomics that enterprise buyers care about.

OpenClaw does document exec approvals, secrets management, logging, OpenTelemetry export, and multi-agent routing. For buyers, the key question is how those features fit into the overall operating model, and whether that model starts from enterprise governance or from a single-user assistant.

The most consistent enterprise concerns are multi-user isolation, partitioned memory, safe secrets handling, approval ergonomics, auditability, and better policy enforcement. They show up repeatedly in the official docs, deployment guides, and public GitHub issues.

Kaji is built as a governed runtime. It gives enterprises a controlled operator surface in Kaji Chat, an execution engine in Kaji Core, durable organizational memory, workflows, sub-agents, and infrastructure-native deployment through Shakudo.

NemoClaw is NVIDIA's packaging effort around the OpenClaw ecosystem. It matters because it shows the market is moving from raw agent excitement toward more secure, packaged, operationally usable deployments.

If you are evaluating OpenClaw for enterprise AI, begin with the trust model and operating boundary. Explore Kaji, see how it works with AI Gateway, and request a demo if you want a governed enterprise runtime built to survive real operating conditions.
